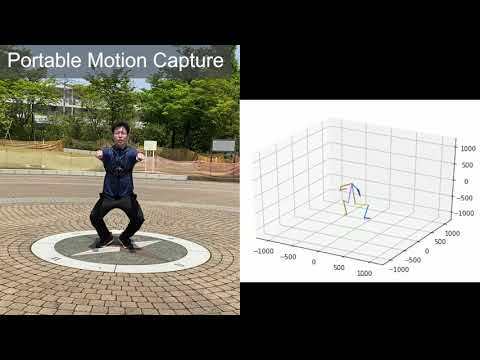

MonoEye: Multimodal Human Motion Capture System Using A Single Ultra-Wide Fisheye Camera

Published in The 33rd Annual ACM Symposium on User Interface Software and Technology (ACM UIST 2020, Full paper), 2020

Recommended citation: Hwang et al. "MonoEye: Multimodal Human Motion Capture System Using A Single Ultra-Wide Fisheye Camera." Proceedings of the 33rd Annual ACM Symposium on User Interface Software and Technology. 2020. https://dl.acm.org/doi/abs/10.1145/3379337.3415856

Authors

Dong-Hyun Hwang*, Kohei Aso*, Ye Yuan**, Kris Kitani**, Hideki Koike*

*Tokyo Institute of Technology, **Carnegie Mellon University

Abstract

We present MonoEye, a multimodal human motion capture system that uses a single RGB camera with an ultra-wide fisheye lens mounted on the user’s chest. Existing optical motion capture systems use multiple cameras that must be synchronized and calibrated. These systems also have usability constraints that limit the user’s movement and operating space. Because MonoEye is based on a single wearable RGB camera, the wearer’s 3D body pose can be captured without space or environment limitations. The body pose captured by our system is aware of the camera orientation, which makes it possible to recognize various motions that existing egocentric motion capture systems cannot. Furthermore, the proposed system captures not only the wearer’s body motion but also their viewport, using head pose estimation and the ultra-wide image. To implement robust multimodal motion capture, we design three deep neural networks, BodyPoseNet, HeadPoseNet, and CameraPoseNet, which estimate 3D body pose, head pose, and camera pose, respectively, in real time. We train these networks on our new, extensive synthetic dataset, which provides 680K rendered frames of people with a wide range of body shapes, clothing, actions, backgrounds, and lighting conditions. To demonstrate the interactive potential of MonoEye, we present several example applications, ranging from common body gestures to context-aware interactions.