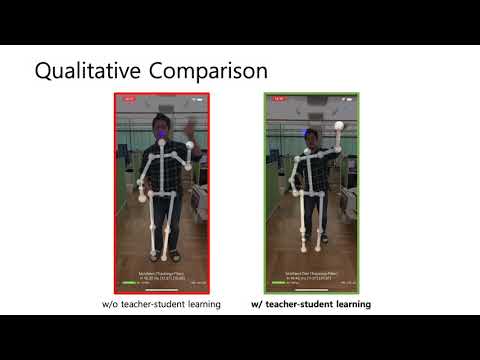

Lightweight 3D Human Pose Estimation Network Training Using Teacher-Student Learning

Published in IEEE/CVF Winter Conference on Applications of Computer Vision 2020 (IEEE/CVF WACV 2020, Full paper), 2020

Recommended citation: Hwang et al. "Lightweight 3D human pose estimation network training using teacher-student learning." Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision. 2020. https://openaccess.thecvf.com/content_WACV_2020/html/Hwang_Lightweight_3D_Human_Pose_Estimation_Network_Training_Using_Teacher-Student_Learning_WACV_2020_paper.html

Authors

Dong-Hyun Hwang, Suntae Kim, Nicolas Monet, Hideki Koike*, and Soonmin Bae**

* Tokyo Institute of Technology, ** Clova AI Video (Current Avatar), NAVER Corp., *** NAVER Labs Europe

Abstract

We present MoVNect, a lightweight deep neural network that captures 3D human pose from a single RGB camera. To improve the overall performance of the model, we apply knowledge distillation based on teacher-student learning to 3D human pose estimation. Real-time post-processing turns the CNN output into temporally stable 3D skeletal information that can be used directly in applications. We implement a 3D avatar application that runs on mobile devices in real time to demonstrate that our network achieves both high accuracy and fast inference. Extensive evaluations on the Human3.6M dataset and on mobile devices show the advantages of our lightweight model, trained with the proposed method, over previous 3D pose estimation methods.
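
To give a rough sense of what teacher-student knowledge distillation can look like for heatmap-based pose estimation, the sketch below blends two error terms: one against the ground-truth heatmap and one against the output of a larger teacher network. This is only an illustration under stated assumptions, not the exact loss from the paper: the function names, the flattened-list heatmap representation, and the blending weight `alpha` are all hypothetical choices made for this example.

```python
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two equal-length flattened heatmaps."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def distillation_loss(
    student: Sequence[float],
    ground_truth: Sequence[float],
    teacher: Sequence[float],
    alpha: float = 0.5,  # assumed blending weight, not taken from the source
) -> float:
    """Blend the ground-truth error with the teacher-mimicry error.

    alpha = 1.0 trains on ground truth only; alpha = 0.0 only mimics the teacher.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    gt_term = mse(student, ground_truth)
    teacher_term = mse(student, teacher)
    return alpha * gt_term + (1.0 - alpha) * teacher_term


# Toy flattened heatmaps (hypothetical values, for illustration only).
student = [0.2, 0.7, 0.1]
ground_truth = [0.0, 1.0, 0.0]
teacher = [0.1, 0.8, 0.1]

loss = distillation_loss(student, ground_truth, teacher, alpha=0.5)
print(f"distillation loss: {loss:.6f}")
```

In this kind of setup the teacher's output acts as a softer target than the ground truth, which is one common motivation for distillation when training a small network intended for mobile inference.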